Nonlinearity: why it makes sense to think big

The world is nonlinear. Most outcomes worth wanting do not cost proportionally more effort; they cost different effort. And once you accept that, Richard Hamming’s 1986 lecture on doing important research stops sounding like advice and starts sounding like a corollary.

Note (2026, expanded): This is the closest thing to a thesis I have for this blog, and I keep coming back to it. The original argument from 2014 is unchanged. I’ve added a final section, “From physics to behavior,” that connects it to Richard Hamming’s 1986 lecture “You and Your Research,” which I think of as the behavioral corollary to the same observation.

Thinking big is usually sold as an attitude: be ambitious, dream larger, push harder. What actually convinced me of it, years ago, was not a motivational poster but a set of observations from physics and statistics: most of the systems we live inside are nonlinear, and once you start noticing that, “think big” stops being a slogan and starts being a straightforward consequence of how the world works.

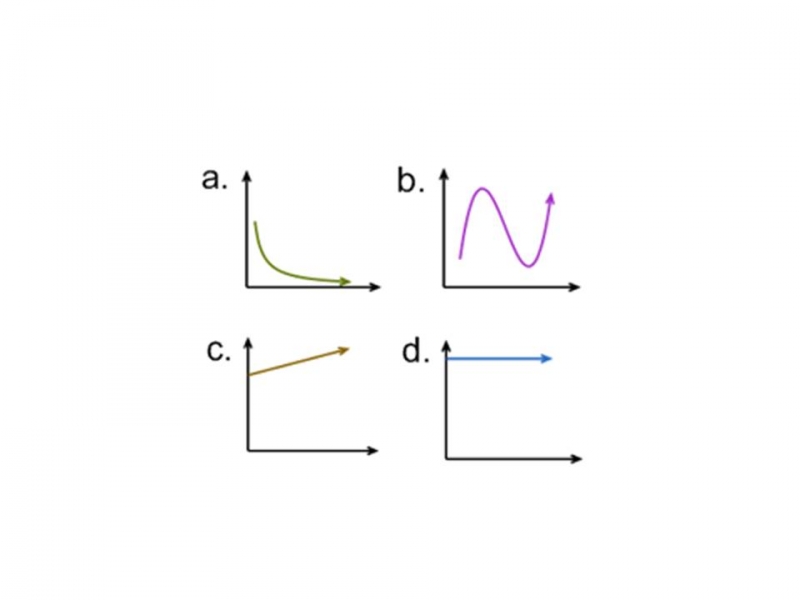

A nonlinear system is one where the output is not directly proportional to the input. Double the effort, and you might get ten times the result, or almost none. Most of the interesting outcomes in life live far away from the middle of that curve.
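To make “ten times the result, or almost none” concrete, here is a minimal Python sketch that doubles the input to three response curves. The curves and their names (`linear`, `power_law`, `saturating`), the exponent log2(10), and the unit scale of the saturating curve are all illustrative assumptions, not models of any real system.

```python
import math


def linear(effort: float) -> float:
    """Output directly proportional to input."""
    return effort


def power_law(effort: float) -> float:
    """Convex response; the exponent log2(10) is chosen so doubling the input multiplies output by 10."""
    return effort ** math.log2(10)


def saturating(effort: float) -> float:
    """Concave response that levels off at 1.0; past the knee, extra effort buys almost nothing."""
    return 1.0 - math.exp(-effort)


def doubling_gain(curve, effort: float) -> float:
    """How many times larger the output gets when the effort is doubled."""
    return curve(2 * effort) / curve(effort)


if __name__ == "__main__":
    for curve in (linear, power_law, saturating):
        gain = doubling_gain(curve, 5.0)
        print(f"{curve.__name__:>10}: doubling effort from 5 multiplies output by {gain:.3f}")
```

The same doubling of effort yields 2x on the linear curve, 10x on the convex one, and under 1% more on the saturating one: the input change is identical, and only the shape of the curve differs.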

Once you look for it, nonlinearity is almost everywhere:

- The upper tails of income and wealth follow a Pareto distribution: a small fraction of people hold most of the total.

- City sizes, word frequencies, and citation counts roughly follow power laws; for word frequencies and city sizes this is known as Zipf’s law.

- Compound interest quietly turns small sustained deposits into outsized balances over decades.

- Neural networks get predictably better, along power-law curves, as you scale parameters, data, and compute together: the scaling laws that shaped the last decade of AI.

- Viral content and open-source projects follow winner-takes-most dynamics: most die quietly, a few eat the world.

- A small number of software bugs and security incidents account for most of the damage.
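The Pareto bullet has a closed form worth seeing. Assuming the quantity follows a Pareto distribution with shape parameter α > 1, its Lorenz curve is L(F) = 1 - (1 - F)^(1 - 1/α), so the share of the total held by the top fraction p is p^(1 - 1/α). With α = log₄ 5 ≈ 1.16 this gives the familiar 80/20 split. A minimal Python sketch; the function and constant names are hypothetical:

```python
import math


def pareto_top_share(top_fraction: float, alpha: float) -> float:
    """Share of the total held by the top `top_fraction` of a Pareto(alpha) population.

    Uses the Pareto Lorenz curve L(F) = 1 - (1 - F) ** (1 - 1/alpha), valid for alpha > 1,
    so the top share is 1 - L(1 - p) = p ** (1 - 1/alpha).
    """
    if not 0.0 < top_fraction <= 1.0:
        raise ValueError("top_fraction must be in (0, 1]")
    if alpha <= 1.0:
        raise ValueError("alpha must exceed 1 for the mean to be finite")
    return top_fraction ** (1.0 - 1.0 / alpha)


# Shape parameter that reproduces the 80/20 split: log base 4 of 5, about 1.16.
ALPHA_80_20 = math.log(5) / math.log(4)

if __name__ == "__main__":
    for alpha in (ALPHA_80_20, 1.5, 3.0):
        share = pareto_top_share(0.2, alpha)
        print(f"alpha={alpha:.2f}: the top 20% hold {share:.0%} of the total")
```

Smaller α means heavier concentration: at α = 3 the top fifth holds only about a third of the total, while near α ≈ 1.16 it holds four fifths.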

The common thread is that the curve from effort to outcome is not a straight line. Some work compounds. Some work distributes. Some work unlocks an asymmetric payoff. And a lot of work does none of those things; it just washes out as noise.

The practical question, then, isn’t “how much can I work?” but “what kind of work sits on the steep part of the curve?” A few heuristics I keep coming back to:

- Does this compound? Will tomorrow’s version be built on top of today’s, or will I be starting from scratch again?

- Does this distribute? Can one unit of effort reach ten people, a thousand, a million?

- Is the payoff asymmetric? Small downside, large upside: a classic option.

- Am I solving a rare problem or a common one? Rare problems concentrate payoff; common ones concentrate competition.
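The “classic option” heuristic is easiest to see as arithmetic. A minimal Python sketch with made-up numbers: each attempt costs 1 unit, and with probability 5% it pays 50. The function names and all three numbers are hypothetical, chosen only to show the shape of the bet.

```python
def expected_value(cost: float, win_probability: float, payoff: float) -> float:
    """Expected net result of one attempt: pay `cost` every time, collect `payoff` with `win_probability`."""
    return win_probability * payoff - cost


def chance_of_at_least_one_win(win_probability: float, attempts: int) -> float:
    """Probability that at least one of `attempts` independent tries pays off."""
    return 1.0 - (1.0 - win_probability) ** attempts


if __name__ == "__main__":
    # Hypothetical numbers, chosen only to show the shape of an asymmetric bet.
    cost, p, payoff = 1.0, 0.05, 50.0
    print(f"worst case per attempt: {-cost:+.2f}")
    print(f"expected value per attempt: {expected_value(cost, p, payoff):+.2f}")
    for attempts in (1, 20, 50):
        chance = chance_of_at_least_one_win(p, attempts)
        print(f"P(at least one win in {attempts} attempts): {chance:.2f}")
```

With these assumed numbers each attempt loses its whole stake 95% of the time, yet the expected value is +1.5 per attempt, and twenty independent attempts give roughly a 64% chance of at least one win. The downside is capped at the cost; the upside is not.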

None of this is a recipe for success. But it does make “think big” feel less like a pep talk and more like a reasonable response to the shape of the world. If the system is nonlinear, then the biggest outcomes don’t cost proportionally more effort; they cost different effort. And if you’re going to pick something to spend years on, you might as well pick something that sits on a steep part of the curve.

From physics to behavior

The heuristics above tell you what to look for. They don’t tell you how to behave so that steep-curve work actually finds you. The clearest answer I know to that second question came from Richard Hamming, in a 1986 lecture titled “You and Your Research.” Hamming had spent thirty years at Bell Labs, for a time sharing an office with Claude Shannon, watching which scientists changed entire fields and which equally smart, equally hardworking colleagues retired forgotten. He wanted to know what the difference actually was. His answer was a short list of habits, and read in this light, each one is the behavioral shadow of one of the heuristics above.

Compounding. Hamming’s most uncomfortable claim is that someone of the same ability who works ten percent harder than you does not produce ten percent more over a career. They produce more than twice as much. The gap doesn’t add; it multiplies, and it compounds quietly for years before anyone notices. That sentence is just the compound-interest curve restated as a career. It is also the reason most people underrate consistency: the daily delta is small enough to dismiss, and the integrated delta is large enough to be life-changing.
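Hamming’s compounding claim can be reproduced with a toy model. A minimal Python sketch, assuming (hypothetically) that accumulated knowledge grows each year by a factor of 1 + 0.25 × effort, and comparing that with a purely additive model in which yearly output is simply proportional to effort. The 0.25 rate and both function names are illustrative assumptions, not figures from Hamming.

```python
def additive_output(effort: float, years: int) -> float:
    """Linear model: yearly output is proportional to effort, and the years simply add up."""
    return effort * years


def compounding_knowledge(effort: float, years: int, base_rate: float = 0.25) -> float:
    """Compounding model: accumulated knowledge grows each year by a factor of (1 + base_rate * effort).

    base_rate is a hypothetical value chosen for illustration, not a measured figure.
    """
    return (1.0 + base_rate * effort) ** years


if __name__ == "__main__":
    # Compare someone working 10% harder (effort 1.1) with a baseline (effort 1.0).
    for years in (1, 5, 10, 20, 40):
        additive_gap = additive_output(1.1, years) / additive_output(1.0, years)
        compound_gap = compounding_knowledge(1.1, years) / compounding_knowledge(1.0, years)
        print(f"{years:>2} years: additive gap {additive_gap:.2f}x, compounding gap {compound_gap:.2f}x")
```

In this toy model the additive gap stays at 1.10x forever. The compounding gap starts out smaller (about 1.02x after one year), overtakes the additive gap in year five, and ends above 2x after forty years (about 2.2x): the “quietly for years before anyone notices” shape.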

Distribution, the open door. Hamming noticed that scientists who kept their office doors closed got more done in the short term, because nothing interrupted them. But the scientists who kept their doors open came out ahead over a career. They were interrupted constantly, and they absorbed every idea that walked past. Ten years in, they were working on problems the closed-door people did not even know existed. Distribution doesn’t only run outward (one unit of effort reaching many people); it also runs inward (many ideas reaching one person). Both are the same nonlinearity: you are building a network whose value scales superlinearly with the number of connections into it.
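One way to read “superlinearly” here, under an illustrative assumption that is not Hamming’s: if incoming ideas create value mainly by being combined in pairs, then k connections give k(k - 1)/2 possible pairings, which grows with the square of k (the same intuition behind Metcalfe’s law for networks). A minimal Python sketch with a hypothetical function name; `math.comb` needs Python 3.8 or later.

```python
import math


def possible_pairings(connections: int) -> int:
    """Distinct pairs that can be formed from `connections` ideas: k choose 2."""
    if connections < 0:
        raise ValueError("connections must be non-negative")
    return math.comb(connections, 2)


if __name__ == "__main__":
    for k in (10, 100, 1000):
        # Connections grow 10x per step; pairings grow roughly 100x.
        print(f"{k:>5} connections -> {possible_pairings(k):>7} possible pairings")
```

Ten times as many connections yields roughly a hundred times as many possible combinations, which is the sense in which an open door pays off superlinearly under this assumption.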

Asymmetric payoffs, by inversion. When Bell Labs refused to give Hamming the team of programmers he wanted, he sat with the rejection, then flipped the question: instead of asking for people to write programs, why couldn’t the machines write programs themselves? That single inversion pushed him to the frontier of computer science. The pattern generalizes: what looks like a defect, flipped correctly, can be the asymmetric option you were looking for. Small downside (the original rejection still stands), large upside (an entire field). Inversion is the technique that makes asymmetric payoffs visible at all.

Rare problems, the important ones. Hamming’s most quoted line is that if you do not work on an important problem, it is unlikely you will do important work. He was unsentimental about why most scientists steer clear of such problems: the odds of failure are high, and a safe adjacent problem publishes faster. But avoidance is exactly what makes important problems rare in the sense of the heuristic above, and rare problems concentrate payoff. The crowd’s exit is the steep part of the curve.

He also invoked Pasteur’s line that luck favors the prepared mind, and he meant it more literally than it usually gets read. You don’t hope for luck. You arrange your days so that, when luck does show up, it lands on a surface where it can compound. Open door. Important problem. Inverted question. Compounded hours. Those are not traits; they are choices.

That is the move I want to leave you with. The world is nonlinear, so the interesting question stops being “how much can I work?” and becomes “what arrangement of my days makes steep-curve outcomes possible at all?” On most days, a life arranged around the first question looks just like one arranged around the second. Over a career, they are not the same life.

Further reading

- Richard Hamming, “You and Your Research” (1986 transcript, University of Virginia).

- Richard Hamming, The Art of Doing Science and Engineering (Stripe Press reprint, 2020). The lecture is the final chapter; the other chapters apply the same mind to the rest of the discipline.